voice.md is the file in our content engineering practice that captures how a specific person actually writes. Not a tone-of-voice guideline. Not a voice clone in the audio sense. Not a brand voice doc full of adjectives. A documented spec that gets loaded as system prompt input.

The term "voice profile" gets stretched in three directions. Marketers hear brand voice guidelines. Engineers hear voice cloning models. Most B2B readers hear tone of voice. None of those are what voice.md is. voice.md is closer to a configuration file than a style guide. It is a structured artifact in a content engineering pipeline.

What does voice.md actually contain?

A voice.md is structured around patterns, not aesthetics. The sections vary by person, but the shape is consistent.

Sentence rhythm. Average sentence length, the variance between short and long sentences, where the person stacks short sentences for emphasis, where they let a long one breathe. This is the hardest layer to fake and the first layer generic AI output breaks.

Vocabulary they use and never use. Words that show up disproportionately in their writing, paired with words they avoid by reflex. "Specific" but never "leverage." "Honest" but never "transparent." "Operator" but never "ninja." Both lists matter equally. The negative list is what stops AI defaults from leaking into output.

Argument structure. How they build a case. Some founders open with the conclusion, then back-fill evidence. Some lay groundwork and land the point at the end. Some reason from named examples; others from numbers; others from analogy. Whichever pattern a person uses tends to hold across their writing.

Dos and don'ts. Concrete rules: no rhetorical questions, no motivational closes, no "imagine if" hypotheticals, no transition phrases that signal a content template. These are extracted from samples, not invented.

Reference points. The domains a person draws analogies from. A founder with a political-comms background pulls different references than one who came up in engineering. The reference shelf gives output a specific texture that reads as authentic.

Voice and register. Point of view, for example first person where the person is the authority and third person otherwise. Where they use "you" versus "the founder" versus proper nouns. Whether they use contractions. Whether they swear, and how often.

Each section has rules at the top and three to five examples from the person's own work underneath. The examples are what make the spec usable. A rule without an example is a wish.
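Sentence rhythm is the most measurable of these layers. A rough sketch of how the numbers could be pulled from a sample, assuming plain-text input and a naive regex sentence splitter; the RhythmProfile fields and the six-word cutoff for a "short" sentence are illustrative choices, not part of the method:

```python
import re
import statistics
from dataclasses import dataclass


@dataclass
class RhythmProfile:
    mean_words: float   # average sentence length in words
    stdev_words: float  # spread between short and long sentences
    short_share: float  # fraction of sentences at or under the short cutoff


def split_sentences(text: str) -> list[str]:
    # Naive splitter: a sentence ends at ., ! or ? followed by whitespace.
    # Abbreviations, URLs and quoted dialogue will fool it.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def rhythm_profile(text: str, short_max: int = 6) -> RhythmProfile:
    """Compute rough rhythm statistics for one writing sample."""
    lengths = [len(s.split()) for s in split_sentences(text)]
    if not lengths:
        raise ValueError("sample contains no sentences")
    short = sum(1 for n in lengths if n <= short_max)
    return RhythmProfile(
        mean_words=statistics.mean(lengths),
        stdev_words=statistics.pstdev(lengths),  # population stdev; 0.0 for one sentence
        short_share=short / len(lengths),
    )
```

Running it over each sample and comparing the results is one way to turn "stacks short sentences for emphasis" into a number the file can state. Treat the output as a starting point for a human pass, not as the rule itself.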

Why do generic brand voice docs fail at AI content?

A brand voice document written for humans describes aesthetics. "Confident but warm." "Direct but never blunt." "Data-driven, with a human edge."

Those phrases are interpretable by a senior writer who has been on the team for a year. They are nearly useless to a language model. The model has no prior on what "warm but direct" means for this specific founder. It has an enormous prior on what "warm but direct" means in general, which is the problem. Generic gets you generic.

A voice profile written as voice.md is different. It tells the model: paragraphs are 2 to 4 sentences, never 6. Open with an observation, not a preamble. Close with a stance, not a question. Never use these twelve words. Always use first person when describing direct experience. The model can act on that. A senior writer reading the same instructions could too, but the point is the model can.
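Rules that concrete can be partly checked in code before a human reads the draft. A minimal sketch, assuming paragraphs are separated by blank lines and using a hypothetical banned list borrowed from the vocabulary examples above; lint_draft and its messages are illustrative:

```python
import re

# Hypothetical values; a real voice.md would supply these.
BANNED_WORDS = {"leverage", "transparent", "ninja"}
MIN_SENTENCES, MAX_SENTENCES = 2, 4


def sentence_count(paragraph: str) -> int:
    # Naive rule: a sentence ends at ., ! or ? followed by whitespace.
    return len([s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s])


def lint_draft(draft: str) -> list[str]:
    """Return rule violations for a draft; an empty list means it passed."""
    problems: list[str] = []
    paragraphs = [p for p in draft.split("\n\n") if p.strip()]
    for i, para in enumerate(paragraphs, start=1):
        n = sentence_count(para)
        if not MIN_SENTENCES <= n <= MAX_SENTENCES:
            problems.append(f"paragraph {i}: {n} sentence(s), want {MIN_SENTENCES}-{MAX_SENTENCES}")
        words = {w.lower() for w in re.findall(r"[A-Za-z']+", para)}
        for word in sorted(words & BANNED_WORDS):
            problems.append(f"paragraph {i}: banned word '{word}'")
    # "Close with a stance, not a question."
    if paragraphs and paragraphs[-1].rstrip().endswith("?"):
        problems.append("draft closes with a question, not a stance")
    return problems
```

Rules like "open with an observation" don't reduce to string checks, so a linter like this catches the mechanical failures and leaves the judgment calls to the model and the founder.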

This is the gap between brand voice as a marketing artifact and voice as an engineering input. Same word, two different jobs.

How do you build a voice.md file?

Building a real voice.md takes samples, not descriptions.

Start with twelve to twenty pieces of unedited content from the person. LinkedIn posts, email threads, recorded conversation transcripts, voice notes, long-form writing. The more varied the format, the cleaner the pattern extraction. For founder-led marketing specifically, LinkedIn posts and conversation transcripts are the highest signal. For a founder without much published content, transcripts of recorded thinking sessions become the primary input.

From those samples, the patterns above get extracted. Each section is documented with rules at the top and examples underneath. Where a rule is fuzzy, the examples carry it. Where the rule is clear, the examples back it up.

The first version is intentionally rough. You generate three to five posts from it using whatever AI tool sits in the production pipeline. The founder reads them and reacts. Where they say "I would never write that," that's a missing don't. Where they say "this is fine but bland," that's a missing positive pattern. Each correction updates the file.
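The correction loop is simple bookkeeping: each reaction lands in a specific section of the file. A sketch, assuming a hypothetical in-memory VoiceSpec with two sections and reaction labels invented for illustration; the file on disk stays plain markdown:

```python
from dataclasses import dataclass, field


@dataclass
class VoiceSpec:
    # Hypothetical structure: rule text per section, kept in insertion order.
    donts: list[str] = field(default_factory=list)
    positive_patterns: list[str] = field(default_factory=list)

    def record_reaction(self, reaction: str, detail: str) -> None:
        """Turn one founder reaction to a generated draft into a spec update."""
        if reaction == "would_never_write":   # "I would never write that"
            self.donts.append(detail)
        elif reaction == "fine_but_bland":    # "this is fine but bland"
            self.positive_patterns.append(detail)
        else:
            raise ValueError(f"unknown reaction: {reaction!r}")

    def to_markdown(self) -> str:
        # Render back to the sections that live in voice.md.
        lines = ["## Don'ts"] + [f"- {d}" for d in self.donts]
        lines += ["", "## Positive patterns"] + [f"- {p}" for p in self.positive_patterns]
        return "\n".join(lines) + "\n"
```

Appending rather than rewriting keeps every correction visible in the diff, which matters once the file is under version control.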

This is where AI content systems become practical. The voice.md is a component in the system, not a one-time deliverable. It refines through use.

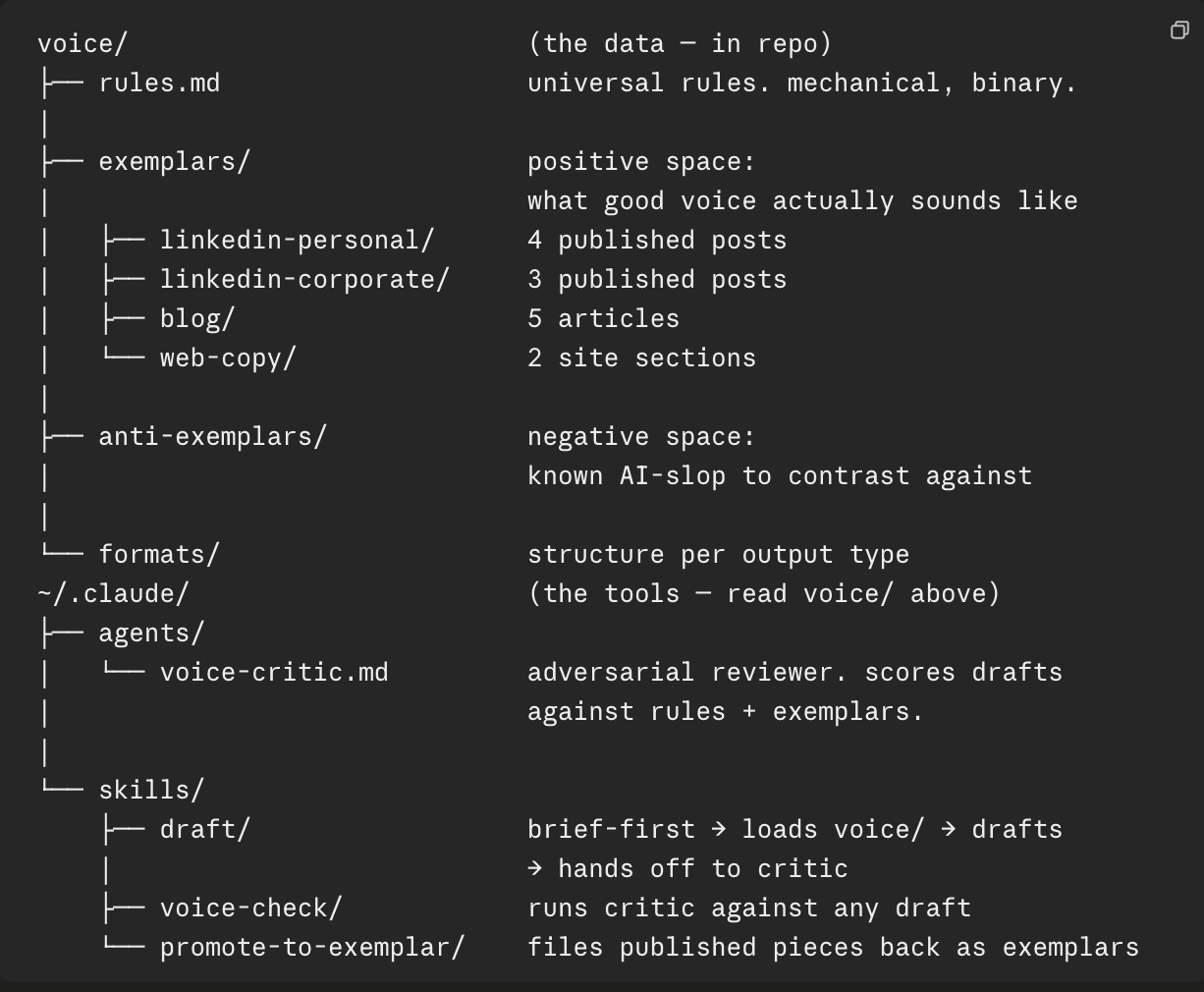

When voice.md grows into a folder

For most cases, a single voice.md file is enough. One person, one channel, one format. The file does the job.

The shape changes when you scale. More channels. More content formats. Different registers. The same person sounds different in a cover letter, a personal LinkedIn post, and a brand homepage hero. A single file flattens those differences. A folder doesn't.

What the folder looks like in practice:

Three additions to the single-file version:

Format files carve out per-output structure rules. linkedin-personal.md has hook patterns and length targets that don't apply to a blog post. blog.md has a frontmatter schema and SEO/GEO requirements that don't apply to LinkedIn. The universal voice rules still live in rules.md. Format files add what's specific.

Exemplars are real published pieces, filed by format. They carry voice signal that rules can't articulate. When the AI catches the rules but the output still doesn't sound right, the exemplars are what close the gap.

Anti-exemplars are known AI-slop drafts marked as not-me. They give the system something concrete to contrast against. Without them, the AI can pass every rule and still produce output that isn't the writer's voice.

The single file describes voice largely in negative space: what it's not, meaning banned words, banned punctuation, banned structures. Exemplars and anti-exemplars fill in the positive space. Both layers matter; rules alone tend to drift over time.

The folder version isn't necessary for everyone. It earns its keep when there's enough surface area that a single file can't carry the load — multiple channels, multiple registers, multiple writers using the system. The simplest version of the spec that does the job is still the right call.
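A pipeline can assemble the folder into one system prompt per format: universal rules first, then the format file, then exemplars and anti-exemplars for that format. A sketch under stated assumptions: rules.md, linkedin-personal.md and blog.md come from the text above, but the directory layout (formats/, exemplars/<format>/, anti-exemplars/<format>/), the labels, and assemble_voice_prompt itself are hypothetical:

```python
from pathlib import Path


def _read_dir(directory: Path, limit: int) -> list[str]:
    # Sorted for deterministic prompts; a missing directory contributes nothing.
    if not directory.is_dir():
        return []
    return [p.read_text(encoding="utf-8") for p in sorted(directory.glob("*.md"))[:limit]]


def assemble_voice_prompt(root: Path, fmt: str, max_examples: int = 3) -> str:
    """Build a system prompt for one output format from a voice folder.

    Assumed (hypothetical) layout:
      root/rules.md                   universal voice rules
      root/formats/<fmt>.md           per-format structure rules
      root/exemplars/<fmt>/*.md       real published pieces
      root/anti-exemplars/<fmt>/*.md  known AI-slop marked as not-me
    """
    parts = [(root / "rules.md").read_text(encoding="utf-8")]
    fmt_file = root / "formats" / f"{fmt}.md"
    if fmt_file.exists():
        parts.append(fmt_file.read_text(encoding="utf-8"))
    for text in _read_dir(root / "exemplars" / fmt, max_examples):
        parts.append("Example of this writer's voice:\n" + text)
    for text in _read_dir(root / "anti-exemplars" / fmt, max_examples):
        parts.append("Not this writer's voice (do not imitate):\n" + text)
    return "\n\n---\n\n".join(parts)
```

Capping exemplars per format keeps the prompt from growing every time a new piece gets published. A format with no files of its own falls back to the universal rules alone.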

How does voice.md get used in production?

voice.md loads as system prompt input on every AI step that touches the person's voice. Drafting, rewriting, summarizing, hook generation, headline writing. Any step that emits text in the person's voice gets the same file.

Because it lives in the repo as a markdown file, it propagates cleanly. A new workflow that needs the founder's voice imports voice.md the same way the existing workflows do. A change to the file flows to every workflow on the next run. There is no syncing across tools, no copy-paste between prompt fields, no drift between platforms.
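The propagation works because every step reads the same file through the same path. A minimal sketch, assuming a hypothetical repo location (voice/voice.md) and a generic chat-style message list; the message shape is illustrative and not tied to a specific model provider's API:

```python
from pathlib import Path

VOICE_PATH = Path("voice/voice.md")  # hypothetical location in the repo


def load_voice(path: Path = VOICE_PATH) -> str:
    # Read at call time, so an edit to the file reaches every workflow on its next run.
    return path.read_text(encoding="utf-8")


def build_messages(step_instructions: str, brief: str, voice: str) -> list[dict[str, str]]:
    """Shared by every voice-bearing step: drafting, rewriting, hooks, headlines."""
    system = f"{voice}\n\n# Task\n{step_instructions}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": brief},
    ]
```

Drafting and hook generation would each call build_messages with their own step instructions and the same voice string, so an edit to the file reaches both without touching either workflow.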

This is what makes it an engineering artifact. It's version-controlled. It has a commit history. Two people can review it. A specific change can be traced to a specific prompt revision and a specific output improvement. None of that is true for a Google Doc full of brand voice adjectives.
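Traceability depends on each output recording which revision of the spec produced it. A sketch, assuming outputs are stored as simple records and using a truncated SHA-256 of the file contents as the revision id (a git commit hash for the file would do the same job); the record fields are hypothetical:

```python
import hashlib
from datetime import datetime, timezone


def voice_version(voice_text: str) -> str:
    # Short content hash: identical files give identical ids, any edit changes it.
    return hashlib.sha256(voice_text.encode("utf-8")).hexdigest()[:12]


def stamp_output(text: str, voice_text: str, step: str) -> dict[str, str]:
    """Attach provenance so an output can be traced back to the spec revision."""
    return {
        "text": text,
        "step": step,
        "voice_version": voice_version(voice_text),
        "generated_at": datetime.now(timezone.utc).isoformat(),
    }
```

With the version on every record, comparing outputs before and after a change to the file becomes a filter on voice_version rather than guesswork.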

In a content system rather than a ghostwriter setup, the voice.md is the asset that makes the system reusable. The ghostwriter equivalent is a senior writer's intuition, which can't be loaded into a different workflow or scaled to a second format without rebuilding from scratch.

What are the limitations of voice.md?

A voice.md is a spec, and specs have limits.

It is only as good as its inputs. Twenty good samples beat fifty mediocre ones. A founder who writes carefully on LinkedIn but sends internal Slack messages that sound like someone else wrote them gets a noisy file unless the inputs are filtered.

It drifts. A person's thinking shifts. New reference points enter, old ones fade. A voice.md from six months ago will start producing content that sounds like the person used to sound, not like they sound now. Refresh every three to four months, or any time the founder's positioning meaningfully shifts.
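The calendar half of that cadence is easy to enforce with a scheduled check. A sketch, assuming the team records a last-reviewed date for the file somewhere the pipeline can read (where that date lives is an assumption), with four months approximated as 120 days:

```python
from datetime import date, timedelta

# Roughly the upper end of "every three to four months".
REFRESH_WINDOW = timedelta(days=120)


def is_stale(last_reviewed: date, today: date, window: timedelta = REFRESH_WINDOW) -> bool:
    """True once the spec has gone longer than the refresh window without review."""
    return today - last_reviewed > window
```

A date check can't see a shift in positioning. That trigger still has to come from a person.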

It cannot capture in-the-moment reactions. A genuinely surprised take on news that broke yesterday, a specific frustration about a specific client problem, a fresh opinion forming in real time. Those need the person in the loop. A voice.md gets you to a faithful baseline. The founder closes the gap on anything that requires their actual presence.

And it cannot fix a thin point of view. If the person's writing samples are vague, the voice.md will be vague. The file documents what is there, not what should be. Voice fidelity assumes there is a voice to be faithful to.

Why is voice.md engineering, not branding?

The case for treating voice as an engineering artifact rather than a brand asset comes down to where it has to work.

A brand voice doc lives next to the visual identity in a folder. It gets referenced when a writer is briefing an agency or onboarding a junior team member. It is read maybe a dozen times a year.

voice.md gets read on every content run. By a model. With no human interpretation layer. The constraints that apply to engineering specs apply to it: clarity, version control, examples paired with rules, rules paired with rationale, ownership, refresh cadence. It belongs in the repo, not in a marketing folder.

That framing changes how it gets maintained. A brand voice doc that is six months stale is a minor problem. A voice.md that is six months stale is producing content that drifts further from the founder every week. Treating it as code, with a maintenance cadence, prevents that decay.

For a wider view of where voice.md fits in the full content engineering stack, see the pillar guide.

Common questions.

What is voice.md?

voice.md is a structured markdown file that captures how a specific person writes: sentence rhythm, vocabulary they use and never use, argument structure, dos and don'ts, and reference points. It is used as system prompt input for AI content generation. It is not a tone-of-voice document, not a brand voice guideline, and not a voice clone in the audio sense.

How is voice.md different from a brand voice document?

Brand voice documents describe aesthetics: confident, conversational, data-driven. voice.md documents patterns: paragraph length, sentence construction, banned vocabulary, argument shape. Aesthetics are nearly useless to a language model. Patterns are not. That difference is why generic brand voice docs fail at AI content production.

How do you build a voice.md?

Start with twelve to twenty unedited samples from the person; the more varied the formats, the better. Extract patterns: sentence rhythm, recurring constructions, vocabulary they use and never use, how they build arguments, the reference points they pull from. Document each section with rules and examples. Test against AI output and refine over the first four to six weeks of production.

How is voice.md used in AI content workflows?

It loads as system prompt input alongside the brief. Any AI content step in the pipeline that touches the person's voice (drafting, rewriting, summarizing) gets the same voice.md so output stays consistent across formats. It lives in the repo, it is version-controlled, and it propagates through every workflow that produces content for that person.

What are the limitations of voice.md?

It is only as good as its inputs. It drifts after a few months as the person's thinking shifts and old reference points fade. It cannot capture in-the-moment reactions to news or events, which is why human review still matters. Treat it as a maintained spec: refresh every few months, flag drift when the founder rewrites specific patterns, version it like any other file in the system.

Should voice.md be a single file or a folder?

For one person on one channel, a single file is usually enough. The folder structure earns its keep when there are multiple channels or registers — a cover letter, a personal LinkedIn post, and a brand homepage hero all sound different even from the same writer. The folder adds format-specific files for per-output structure rules, exemplars (real published pieces per format) for voice signal that rules can't articulate, and anti-exemplars (known AI-slop) to contrast against. The simplest version that does the job is still the right call.